Are we nearly there yet?

When to stop testing automated driving functions

by Professor Simon Burton

A question I am often asked in my day job, in one form or another, is “How much testing do we need to do before releasing this level 4 automated driving function?” I inevitably disappoint my interrogators by failing to provide a simple answer. Depending on my mood, I might play the wise guy and quote an old computer science hero of mine:

(Program) testing can be used to show the presence of bugs, but never to show their absence! — Edsger W. Dijkstra, 1970.

Sometimes I give the more honest but equally unhelpful response: “We simply don’t know for sure.”

The problem is that demonstrating the safety of autonomous vehicles through road tests alone would require millions or even billions of driven kilometres. For example, to argue with a confidence level of 95% that the mean distance between collisions is at least 3.85 million km (based on German crash statistics), a total of 11.6 million test kilometres must be driven without a single collision.
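The 11.6 million km figure can be reproduced under one assumption that is ours rather than stated above: collisions arrive as a Poisson process with a constant rate. If no collisions are observed over a distance d, the claim “mean distance between collisions ≥ m” holds at confidence C when d ≥ −ln(1 − C) · m. A minimal sketch:

```python
import math


def zero_failure_distance(mean_km_between_collisions: float, confidence: float) -> float:
    """Collision-free distance needed to claim, at the given confidence, that the
    true mean distance between collisions is at least the stated value.

    Assumes collisions arrive as a Poisson process with a constant rate.
    """
    return -math.log(1.0 - confidence) * mean_km_between_collisions


exact = zero_failure_distance(3.85e6, 0.95)
rule_of_three = 3.0 * 3.85e6  # common approximation: -ln(0.05) is roughly 3

print(f"exact: {exact / 1e6:.2f} million km")                  # exact: 11.53 million km
print(f"rule of three: {rule_of_three / 1e6:.2f} million km")  # rule of three: 11.55 million km
```

The exact formula gives about 11.53 million km; the quoted 11.6 million km appears consistent with the rule-of-three approximation, rounded. Either way, the required distance scales linearly with the target mean distance between collisions, so more demanding targets push it up proportionally.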

Of course, these test drives would also need to fit into an iterative build, test, fix, repeat process: every time we change the function or its environment, we would need to start all over again.

Challenges in testing highly automated driving

However, it is not just the statistical nature of the problem that is challenging. Automated driving functions pose specific challenges to the design of test approaches:

- Controllability and coverage: How can we control all relevant aspects of the operational design domain (ODD) and system state in order to test specific attributes of the function and triggering conditions of the environment? And how do we argue that we have achieved coverage of the ODD?

- Repeatability and observability: A range of uncertainties in both the system and its environment leads to major challenges when demonstrating the robustness of the function within the ODD and when reproducing failures observed in the field for analysis in the lab. Furthermore, it may not be possible to determine what state the system was in when the failure occurred, due either to system complexity or to the opaque nature of the technology.

- Definition of “pass” criteria: Due to the lack of a detailed specification of the required system behaviour under all possible conditions, there are significant challenges in defining the pass/fail criteria for the tests (including a definition of “ground truth”).
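One way to make “coverage of the ODD” countable is to discretise each ODD parameter into classes and measure which combinations the tests have exercised. The sketch below is purely illustrative: the three parameters and their classes are hypothetical, and combinatorial coverage says nothing about triggering conditions that the discretisation itself fails to capture.

```python
from itertools import combinations, product

# Hypothetical, heavily simplified ODD: each parameter is discretised into classes.
ODD = {
    "road": ["motorway", "urban", "rural"],
    "weather": ["clear", "rain", "fog"],
    "light": ["day", "night"],
}


def full_coverage(tests, odd):
    """Fraction of all parameter-value combinations exercised by at least one test."""
    all_cells = set(product(*odd.values()))
    hit = {tuple(t[k] for k in odd) for t in tests}
    return len(hit & all_cells) / len(all_cells)


def pairwise_coverage(tests, odd):
    """Fraction of all value pairs, over every pair of parameters, exercised by a test."""
    keys = list(odd)
    required = {
        (a, va, b, vb)
        for a, b in combinations(keys, 2)
        for va, vb in product(odd[a], odd[b])
    }
    hit = {(a, t[a], b, t[b]) for t in tests for a, b in combinations(keys, 2)}
    return len(hit & required) / len(required)


tests = [
    {"road": "motorway", "weather": "clear", "light": "day"},
    {"road": "urban", "weather": "rain", "light": "night"},
]
print(f"full: {full_coverage(tests, ODD):.2f}, pairwise: {pairwise_coverage(tests, ODD):.2f}")
# full: 0.11, pairwise: 0.29
```

Full combinatorial coverage grows multiplicatively with every parameter added (here 3 × 3 × 2 = 18 cells), which is one reason lower-strength criteria such as pairwise coverage are often used as intermediate targets.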

A better answer to the question

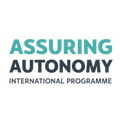

As I have mentioned several times in my blog posts, as safety engineers we typically counteract uncertainties in our safety arguments by using a breadth of evidence. In the verification and validation (V&V) phase of our framework, this means understanding the relative merits of different analysis, simulation and test techniques and then selecting a combination appropriate to the set of quality goals to be argued. The selection of methods and associated fulfilment criteria requires a systematic approach, typically referred to as a test strategy or test plan.
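To make the idea of a breadth of evidence concrete, a test strategy can be recorded as a mapping from quality goals to the V&V methods and fulfilment criteria that support them. The sketch below assumes a hypothetical data model (the goal names, method labels and the two-method threshold are illustrative, not part of the framework described here) and flags goals whose completed evidence comes from fewer than two distinct kinds of method.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    method: str      # kind of V&V method, e.g. "formal", "simulation", "vehicle_test"
    criterion: str   # fulfilment or stopping criterion, as free text
    met: bool        # whether the criterion has been satisfied


@dataclass
class QualityGoal:
    name: str
    evidence: list = field(default_factory=list)


def weak_goals(goals, min_methods=2):
    """Return names of goals backed by met evidence from fewer than min_methods kinds of method."""
    weak = []
    for goal in goals:
        kinds = {e.method for e in goal.evidence if e.met}
        if len(kinds) < min_methods:
            weak.append(goal.name)
    return weak


plan = [
    QualityGoal("robust to sensor glare", [
        Evidence("simulation", "all glare variants in scenario catalogue pass", True),
        Evidence("vehicle_test", "test-track runs at low sun angles pass", False),
    ]),
    QualityGoal("safe gap on cut-in", [
        Evidence("formal", "planner property holds on abstract model", True),
        Evidence("simulation", "pairwise coverage of cut-in parameters >= 0.9", True),
    ]),
]
print(weak_goals(plan))  # ['robust to sensor glare']
```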

V&V methods

What methods, then, are available for performing V&V of automated driving functions, with a specific focus on functional insufficiencies?

- Formal verification: Techniques such as symbolic model checking and theorem proving can provide a complete analysis of the entire input space. However, such techniques require very precisely defined criteria, typically do not scale to large or poorly defined input spaces, and can produce inscrutable results. Their use in this context may therefore be limited to restricted properties of certain functional components, such as the behavioural planner, although they may be beneficial in uncovering edge cases that had not been considered in the specification.

- Simulation: The use of simulation when testing components of an automated driving system addresses the issues of controllability, observability and repeatability by simulating the interfaces of the system or individual components within a controlled, synthetic environment. Simulation can be used to address a number of V&V tasks, including:

  - simulation of physical properties of sensors to better understand the causes of triggering conditions (e.g. analysis of the propagation of radar signals through various materials);

  - simulation of inputs to the sensing and understanding functions (e.g. based on synthetic video images);

  - simulation of decision functions based on an abstract representation of the driving situation.

- Vehicle-based tests: The fidelity of the simulation and the representativeness of its results must be validated before those results can make a significant contribution to the safety case and justify reduced real-world testing. The methods described above can be used to demonstrate that the system reacts robustly to known triggering conditions, but they are less well suited to arguing resilience against unknown triggering conditions. Therefore, some vehicle-based tests will always be required. These may be performed in controlled environments (e.g. on test tracks using mock-up vehicles and pedestrians) and on the open road.
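The trade-off described for formal verification can be illustrated in miniature. The sketch below is not symbolic model checking or theorem proving; it is plain exhaustive enumeration over a small, discretised input space, which is feasible only because that space is tiny. The planner rule, the property and the value ranges are all hypothetical. Checking every input nonetheless surfaces an edge case (a slow approach at a very short gap) that a rule based only on time-to-collision misses.

```python
from itertools import product


def plan(gap_m: int, closing_speed_mps: int) -> str:
    """Hypothetical toy longitudinal planner: brake if time-to-collision < 2 s."""
    if closing_speed_mps <= 0:  # not approaching the object ahead
        return "keep"
    ttc_s = gap_m / closing_speed_mps
    return "brake" if ttc_s < 2.0 else "keep"


def counterexamples(gaps, speeds):
    """Enumerate the whole finite input space and return every input violating
    the property: 'never keep speed when approaching an object closer than 5 m'."""
    return [
        (g, v)
        for g, v in product(gaps, speeds)
        if v > 0 and g < 5 and plan(g, v) == "keep"
    ]


print(counterexamples(range(0, 51), range(-5, 31)))
# [(2, 1), (3, 1), (4, 1), (4, 2)]
```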

Each type of method has its place in the overall V&V strategy and is used to gather evidence related to specific system properties. The use of coverage and stopping criteria for each V&V method can help plan effort and estimate the residual failure rate of the system. For example, extreme value theory can be used to estimate failure rates from observations of the internal system state and of “near misses”. Such measures provide more “countable” events than relying on actual hazardous situations alone, and thus a more accurate prediction. These estimation models, however, need to be supported by a set of assumptions confirmed through other design arguments and V&V methods.
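To illustrate how near misses can stand in for collisions, the sketch below uses one common extreme value approach: peaks over threshold, with a generalised Pareto distribution (GPD) fitted to the tail. Several choices are assumptions of ours, not the method described above: minimum time-to-collision (TTC) serves as the near-miss measure, the 1.5 s threshold is arbitrary, the GPD is fitted by the simple method of moments rather than maximum likelihood, and the data are synthetic. In practice, threshold selection and tail-fit diagnostics need as much care as the fit itself.

```python
import math
import random
import statistics


def fit_gpd_moments(exceedances):
    """Method-of-moments fit of a generalised Pareto distribution (GPD).

    Returns (xi, sigma). Only meaningful if the true shape xi < 0.5,
    so that the variance exists.
    """
    m = statistics.fmean(exceedances)
    ratio = m * m / statistics.variance(exceedances)
    xi = 0.5 * (1.0 - ratio)
    sigma = 0.5 * m * (1.0 + ratio)
    return xi, sigma


def gpd_survival(y, xi, sigma):
    """P(Y > y) for a GPD with shape xi and scale sigma, y >= 0."""
    if abs(xi) < 1e-12:
        return math.exp(-y / sigma)
    z = 1.0 + xi * y / sigma
    if z <= 0.0:  # beyond the finite upper end point (only possible when xi < 0)
        return 0.0
    return z ** (-1.0 / xi)


def collision_rate_per_km(min_ttc_s, km_driven, threshold_s=1.5):
    """Peaks-over-threshold estimate of collisions per km.

    Each entry of min_ttc_s is the minimum TTC of one encounter. Encounters
    below threshold_s count as near misses; a collision corresponds to
    TTC <= 0, i.e. an exceedance of at least threshold_s.
    """
    exceedances = [threshold_s - t for t in min_ttc_s if t < threshold_s]
    if len(exceedances) < 2:
        raise ValueError("need at least two near misses to fit the tail")
    xi, sigma = fit_gpd_moments(exceedances)
    near_misses_per_km = len(exceedances) / km_driven
    return near_misses_per_km * gpd_survival(threshold_s, xi, sigma)


# Synthetic data only: 20,000 encounters whose margin below the 1.5 s threshold
# is exponentially distributed, plus 1,000 unremarkable encounters.
rng = random.Random(7)
ttc = [1.5 - rng.expovariate(1 / 0.25) for _ in range(20_000)] + [5.0] * 1_000
print(f"{collision_rate_per_km(ttc, km_driven=1e6):.2e} collisions per km")
```

Because every near miss contributes to the tail fit, the estimate rests on thousands of events rather than a handful of collisions. Its validity, however, hinges on the assumption that the near-miss tail extrapolates to collisions, which is exactly the kind of assumption that must be confirmed through other arguments.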

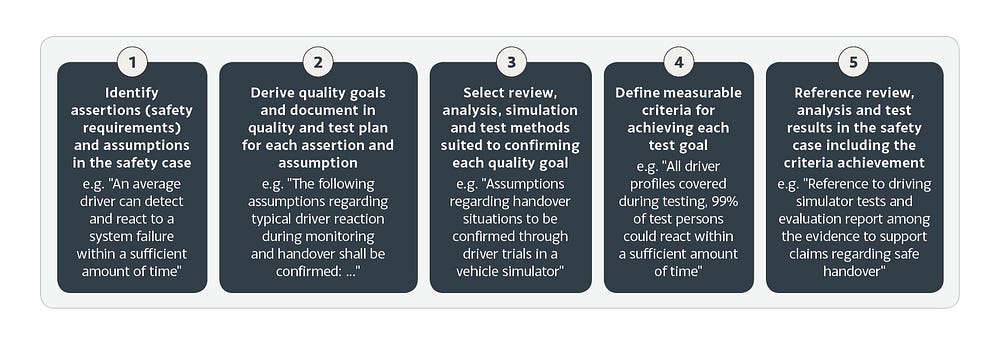

Simulation, analysis and component-level tests are best suited to detecting rare edge cases or testing scenarios too dangerous to re-enact in the field. Real-world tests are more likely to detect issues that occur frequently or that arise from emergent properties of the interaction of all the system's components.

As mentioned in my other posts, the assurance case framework I propose is an iterative one. This means that the results of V&V should be used to refine the domain analysis and system design. To share and make effective use of sources of information such as simulation and recorded drive data, a common semantic model is required. This would allow, for example, unexpected scenarios encountered in the field to be reconstructed within a simulated environment and numerous variations of their conditions to be created, in order to better understand the limits of the system and the desired safety properties.
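As a rough illustration of reconstructing a field scenario and varying its conditions, the sketch below assumes a hypothetical, minimal semantic model of a cut-in manoeuvre; real scenario description formats carry far more structure (road geometry, actor trajectories, environment). The recorded values are varied over a small grid, and physically meaningless variants are discarded before they would be handed to a simulator (not shown).

```python
from dataclasses import dataclass, replace
from itertools import product


@dataclass(frozen=True)
class CutInScenario:
    """Hypothetical minimal semantic model of a cut-in scenario."""
    ego_speed_mps: float
    cut_in_gap_m: float
    cut_in_speed_mps: float
    weather: str


def variations(recorded, gap_offsets_m, speed_offsets_mps, weathers):
    """Return variants of a recorded scenario over a grid of perturbations."""
    variants = []
    for dg, dv, weather in product(gap_offsets_m, speed_offsets_mps, weathers):
        gap = recorded.cut_in_gap_m + dg
        speed = recorded.cut_in_speed_mps + dv
        if gap <= 0.0 or speed < 0.0:  # discard physically meaningless variants
            continue
        variants.append(replace(recorded, cut_in_gap_m=gap,
                                cut_in_speed_mps=speed, weather=weather))
    return variants


# Hypothetical values for a cut-in reconstructed from recorded drive data.
recorded = CutInScenario(ego_speed_mps=25.0, cut_in_gap_m=12.0,
                         cut_in_speed_mps=20.0, weather="clear")
grid = variations(recorded, (-15.0, -5.0, 0.0, 5.0), (-2.0, 0.0, 2.0), ("clear", "rain", "fog"))
print(len(grid))  # 27
```

A grid is the simplest choice; with more parameters, random or search-based sampling aimed at the system's performance boundaries is a common alternative.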

You can download a free introductory guide to assuring the safety of highly automated driving: essential reading for anyone working in the automotive field.

Please also check out my colleague Zaid’s great blog on situation coverage for automated driving using simulation.

Professor Simon Burton

Director Vehicle Systems Safety

Robert Bosch GmbH

Simon is also a Programme Fellow on the Assuring Autonomy International Programme. Contribute to the strategic development of the Programme as a Fellow.

assuring-autonomy@york.ac.uk

www.york.ac.uk/assuring-autonomy